When you are used to snappy desktops or locally hosted virtual machines and then have to move to the cloud, you expect similar performance for a reasonable price. Unfortunately, that was not my experience with deploying AX 8.1 in the Azure cloud. This post is about setting expectations for MSDyn365FO developer VM performance in the cloud versus locally.

First of all, when you deploy your developer VM in the cloud, you have two options, Standard and Premium, which refer to the kind of storage allocated to your machine. The default size is D13 (8 cores, 56 GB RAM) with regular rotating hard disks; for flash storage with memory-optimized compute you need one of the DS-prefixed sizes. You can read about them in more detail on these Microsoft Docs pages:

Premium disk tiers

Virtual machine sizing

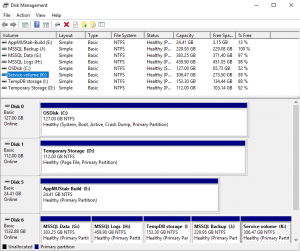

When it comes to premium performance, the virtual machine template that Microsoft has built for the Lifecycle Services deployment uses the following disk layout:

| Purpose | Tier | IOPS | Throughput |
|---|---|---|---|
| Operating System | P10 | 500 | 25 MB/sec (50K reads) |
| Services and Data | P20 | 2300 | 150 MB/sec (64K reads) |
| Temporary Build | P10 | 500 | 25 MB/sec (50K reads) |

The next setting you may choose is the number of disks you would like to stripe together for the Services and Data block. The default number is 3 x 512GB. Striping gives you additional throughput. The more disks you have in the same group, the faster you can read from them.
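To put a number on the striping benefit, here is a minimal sketch that adds up the per-disk P20 limits from the table above for the default three-disk stripe. It assumes the limits scale linearly with the number of disks, which is only a theoretical ceiling: the VM size has its own disk limits, so real results can be lower. The variable names are mine, for illustration only.

```powershell
# Per-disk limits for the P20 tier, taken from the table above
$diskIops = 2300
$diskMBps = 150

# Default number of striped disks for the Services and Data block
$diskCount = 3

# Assumption: per-disk limits simply add up across the stripe set
$stripeIops = $diskIops * $diskCount
$stripeMBps = $diskMBps * $diskCount

"{0} disks: up to {1} IOPS and {2} MB/sec" -f $diskCount, $stripeIops, $stripeMBps
```

For the default layout this works out to 6900 IOPS and 450 MB/sec at best.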

We started with the Standard template. Once the VM was deployed, we did the initial patching and setup (Windows and Visual Studio updates). It immediately became obvious that stopping and starting the VM causes a lot of grief. Windows starting up, checking for system updates, Windows Defender validating the system, waiting for the background services to come up, opening Visual Studio and waiting for the Team Explorer connection all take a very long time.

If you go to a meeting, take a lunch break, or otherwise do not need the VM for a while, there is no “suspend” option that pauses it and saves some money. Your only option is to shut the VM down fully so that it reaches the Stopped (deallocated) state, in which case you do not spend extra money. Then you wait several minutes to bring the whole system up again.

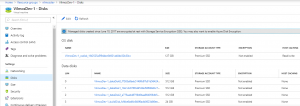
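If you want to confirm from a script that the VM really reached the deallocated state, a sketch along these lines may help. The `Get-PowerState` helper and the resource names are hypothetical. The Azure calls are left commented out because they need a signed-in AzureRM session, and I am assuming that `Get-AzureRmVM -Status` reports a code such as `PowerState/deallocated` in its `Statuses` list.

```powershell
# Hypothetical names; substitute your own resource group and VM
$rgName = 'my-dev-rg'
$vmName = 'my-dev-vm'

# Hypothetical helper: pick the power state out of a list of status codes
# such as 'ProvisioningState/succeeded' and 'PowerState/deallocated'
function Get-PowerState {
    param([string[]]$StatusCodes)

    $code = $StatusCodes | Where-Object { $_ -like 'PowerState/*' } | Select-Object -First 1
    if ($code) {
        return ($code -split '/', 2)[1]
    }
    return 'unknown'
}

# Usage against a real VM (requires a signed-in AzureRM session):
# Stop-AzureRmVM -ResourceGroupName $rgName -Name $vmName -Force
# $status = Get-AzureRmVM -ResourceGroupName $rgName -Name $vmName -Status
# Get-PowerState -StatusCodes $status.Statuses.Code
```

Anything other than `deallocated` would suggest that the compute is still reserved for the VM.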

After this I wanted to give Premium a try to see if it is any better. I did not want to lose the settings and the work I had done so far on the VM. Luckily, there is a way to resize my D13v2 deployment to DS13v2 and convert the VHD disks from the Standard to the Premium tier with an Azure Resource Manager (AzureRM) PowerShell script:

```powershell
# Name of the resource group that contains the VM
$rgName = 'vilmosdev'

# Name of your virtual machine
$vmName = 'VilmosDev-1'

# Choose between Standard_LRS and Premium_LRS based on your scenario
$storageType = 'Premium_LRS'

# Premium-capable size
# Required only if converting storage from standard to premium
$size = 'Standard_DS13_v2'

# Stop and deallocate the VM before changing the size
Stop-AzureRmVM -ResourceGroupName $rgName -Name $vmName -Force

$vm = Get-AzureRmVM -Name $vmName -ResourceGroupName $rgName

# Change the VM size to a size that supports premium storage
# Skip this step if converting storage from premium to standard
$vm.HardwareProfile.VmSize = $size
Update-AzureRmVM -VM $vm -ResourceGroupName $rgName

# Get all disks in the resource group of the VM
$vmDisks = Get-AzureRmDisk -ResourceGroupName $rgName

# For disks that belong to the selected VM, convert to premium storage
foreach ($disk in $vmDisks)
{
    if ($disk.ManagedBy -eq $vm.Id)
    {
        $diskUpdateConfig = New-AzureRmDiskUpdateConfig -AccountType $storageType
        Update-AzureRmDisk -DiskUpdate $diskUpdateConfig -ResourceGroupName $rgName `
            -DiskName $disk.Name
    }
}

# Bring the VM back up on the new size and storage tier
Start-AzureRmVM -ResourceGroupName $rgName -Name $vmName
```

If you check it in the Resource Groups section of the Azure Portal, the layout of the converted VM looks slightly different from the Premium LCS template. But with this you can already squeeze out a little more juice and shave off some waiting time.

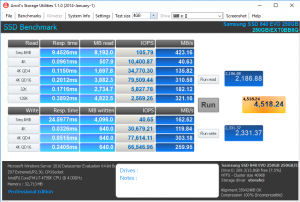

The faster tier already felt better, but it is still far from what we get on a local deployment. The reason is summarized in the table above: you are capped by IOPS and throughput per disk (stripe). If you are doing 4K access on a 500 IOPS SSD, your throughput is throttled to 2 MB/sec. Wow!
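The arithmetic behind that figure is simply the IOPS cap multiplied by the block size. As a rough sketch, the hypothetical helper below (`Get-ThroughputMBps` is my own name, not part of any Azure module) does the conversion using decimal units, 1 MB = 1000 KB, to match the rounding above.

```powershell
# Hypothetical helper: throughput ceiling in MB/sec for an IOPS cap and block size
function Get-ThroughputMBps {
    param([int]$Iops, [int]$BlockSizeKB)

    # Assumption: decimal units (1 MB = 1000 KB), as in the rounded figures in the text
    return ($Iops * $BlockSizeKB) / 1000
}

$p10Small = Get-ThroughputMBps -Iops 500 -BlockSizeKB 4     # P10 disk, 4K access
$p20Small = Get-ThroughputMBps -Iops 2300 -BlockSizeKB 4    # P20 disk, 4K access
$p20Large = Get-ThroughputMBps -Iops 2300 -BlockSizeKB 64   # P20 disk, 64K access
```

With small blocks even the P20 disk stays under 10 MB/sec. It takes 64K reads to get near the 150 MB/sec listed in the table, which is why workloads made of many tiny files suffer the most.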

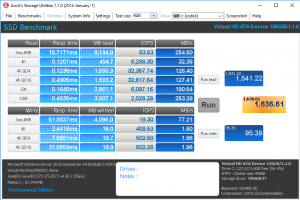

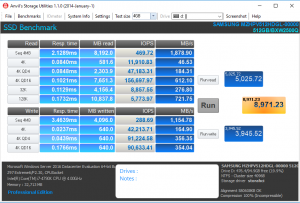

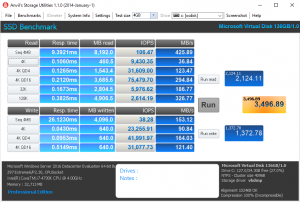

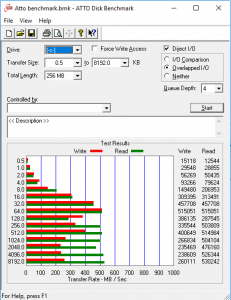

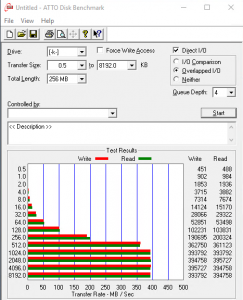

I had to pull out some tools to measure this myself and compare it against a desktop-grade workstation that is a couple of years old, with virtualization enabled (4-core/8-thread i7-4790K with Hyper-Threading, 32 GB DDR3 RAM, SATA 6 Gbps HDD and SSD, M.2 SSD disks).

The throttling on Azure is clearly visible in the write results for both IOPS and throughput; the desktop workstation wins hands down.

These synthetic measurements translate to real-world usage as well. When you need to build a larger model, or create the XPath database from several thousand small XML files every time you want to include changes, every second counts.

Cost-wise, a D13v2 Standard tier machine running 6 hours a day, 22 days a month, 12 months a year (1,584 hours) at an estimated £1.20 per hour puts the annual price of a developer VM close to £2,000. The same with Premium storage (1x 128 GB OS, 3x 512 GB storage) is roughly £2.10 per hour, putting the total at about £3,300 per annum. If you build a decent workstation and acquire the product licenses, the bill stops around the lower price mark.
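If your usage pattern differs, the estimate is easy to redo. The sketch below repeats the calculation with the hourly rates quoted above; they are my estimates, not official pricing, and the variable names are only for illustration.

```powershell
# Usage pattern from the text: 6 hours a day, 22 days a month, 12 months
$hoursPerYear = 6 * 22 * 12

# Estimated hourly rates in GBP (decimal literals to avoid rounding noise)
$standardRate = 1.2d
$premiumRate  = 2.1d

$standardCost = $hoursPerYear * $standardRate
$premiumCost  = $hoursPerYear * $premiumRate

"Standard: about {0} GBP per year" -f [math]::Round($standardCost)
"Premium:  about {0} GBP per year" -f [math]::Round($premiumCost)
```

Changing the hours or the rates in the first few lines shows quickly where the break-even against a self-built workstation lies.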

For now my decision is to stick with a self-built workstation that can support 1 or 2 developers, and to look at Azure again once the price drops considerably or the throughput multiplies for the same money. If you have been using the cloud for AX development for a couple of months, please do share your experience with developer VM performance as well.

Cloud SSD:

Local SSD:

Cloud HDD:

Local HDD:

ATTO benchmark comparison for Local vs. Cloud SSD:

Some additional reading on Azure performance:

http://www.instructorbrandon.com/case-of-the-slow-ax-7-azure-dynamics-ax-implementation/

https://engineering.pivotal.io/post/gobonniego_results/

https://www.petri.com/performance-results-of-aggregating-standard-azure-disks
